Abstract

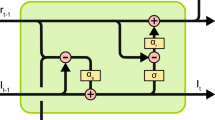

Recent work has shown that convolutional neural networks (CNNs) trained on image recognition tasks can serve as valuable models for predicting neural responses in primate visual cortex. However, these models typically require biologically infeasible levels of labelled training data, so this similarity must at least arise via different paths. In addition, most popular CNNs are solely feedforward, lacking a notion of time and recurrence, whereas neurons in visual cortex produce complex time-varying responses, even to static inputs. Towards addressing these inconsistencies with biology, here we study the emergent properties of a recurrent generative network that is trained to predict future video frames in a self-supervised manner. Remarkably, the resulting model is able to capture a wide variety of seemingly disparate phenomena observed in visual cortex, ranging from single-unit response dynamics to complex perceptual motion illusions, even when subjected to highly impoverished stimuli. These results suggest potentially deep connections between recurrent predictive neural network models and computations in the brain, providing new leads that can enrich both fields.

Data availability

The primary dataset used in this work is the KITTI dataset (ref. 13), which can be obtained at http://www.cvlibs.net/datasets/kitti/raw_data.php. All other data may be obtained upon request to the authors.

Code availability

Code for the PredNet model is available at https://github.com/coxlab/prednet. All other code may be obtained upon request to the authors.

References

Yamins, D. L. K. et al. Performance-optimized hierarchical models predict neural responses in higher visual cortex. Proc. Natl Acad. Sci. USA 111, 8619–8624 (2014).

Yamins, D. L. K. & DiCarlo, J. J. Using goal-driven deep learning models to understand sensory cortex. Nat. Neurosci. 19, 356–365 (2016).

Khaligh-Razavi, S.-M. & Kriegeskorte, N. Deep supervised, but not unsupervised, models may explain IT cortical representation. PLoS Comput. Biol. 10, 1–29 (2014).

Nayebi, A. et al. Task-driven convolutional recurrent models of the visual system. In Advances in Neural Information Processing Systems 5290–5301 (NeurIPS, 2018).

Deng, J. et al. ImageNet: a large-scale hierarchical image database. In IEEE Conference on Computer Vision and Pattern Recognition 248–255 (IEEE, 2009).

Tang, H. et al. Recurrent computations for visual pattern completion. Proc. Natl Acad. Sci. USA 115, 8835–8840 (2018).

Kar, K., Kubilius, J., Schmidt, K., Issa, E. B. & DiCarlo, J. J. Evidence that recurrent circuits are critical to the ventral stream’s execution of core object recognition behavior. Nat. Neurosci. 22, 974–983 (2019).

Lotter, W., Kreiman, G. & Cox, D. D. Deep predictive coding networks for video prediction and unsupervised learning. International Conference on Learning Representations (ICLR, 2017).

Rao, R. P. N. & Ballard, D. H. Predictive coding in the visual cortex: a functional interpretation of some extra-classical receptive-field effects. Nat. Neurosci. 2, 79–87 (1999).

Friston, K. A theory of cortical responses. Philos. Trans. R. Soc. Lond. B Biol. Sci. 360, 815–836 (2005).

Spratling, M. W. Unsupervised learning of generative and discriminative weights encoding elementary image components in a predictive coding model of cortical function. Neural Comput. 24, 60–103 (2012).

Wen, H. et al. Deep predictive coding network for object recognition. Proc. 35th International Conference on Machine Learning 80, 5266–5275 (2018).

Geiger, A., Lenz, P., Stiller, C. & Urtasun, R. Vision meets robotics: the KITTI dataset. Int. J. Robot. Res. 32, 1231–1237 (2013).

Softky, W. R. Unsupervised pixel-prediction. In Advances in Neural Information Processing Systems 809–815 (NeurIPS, 1996).

Lotter, W., Kreiman, G. & Cox, D. Unsupervised learning of visual structure using predictive generative networks. International Conference on Learning Representations (ICLR, 2016).

Mathieu, M., Couprie, C. & LeCun, Y. Deep multi-scale video prediction beyond mean square error. International Conference on Learning Representations (ICLR, 2016).

Srivastava, N., Mansimov, E. & Salakhutdinov, R. Unsupervised learning of video representations using LSTMs. Proc. 32nd International Conference on Machine Learning 37, 843–852 (2015).

Dosovitskiy, A. & Koltun, V. Learning to act by predicting the future. International Conference on Learning Representations (ICLR, 2017).

Finn, C., Goodfellow, I. J. & Levine, S. Unsupervised learning for physical interaction through video prediction. In Advances in Neural Information Processing Systems 64–72 (NeurIPS, 2016).

Elman, J. L. Finding structure in time. Cogn. Sci. 14, 179–211 (1990).

Hawkins, J. & Blakeslee, S. On Intelligence (Times Books, 2004).

Luo, Z., Peng, B., Huang, D.-A., Alahi, A. & Fei-Fei, L. Unsupervised learning of long-term motion dynamics for videos. In The IEEE Conference on Computer Vision and Pattern Recognition 7101–7110 (IEEE, 2017).

Lee, A. X. et al. Stochastic adversarial video prediction. Preprint at https://arxiv.org/pdf/1804.01523.pdf (2018).

Villegas, R. et al. High fidelity video prediction with large stochastic recurrent neural networks. In Advances in Neural Information Processing Systems 81–91 (NeurIPS, 2019).

Villegas, R., Yang, J., Hong, S., Lin, X. & Lee, H. Learning to generate long-term future via hierarchical prediction. International Conference on Learning Representations (ICLR, 2017).

Denton, E. & Fergus, R. Stochastic video generation with a learned prior. In Proceedings of 35th International Conference on Machine Learning 1174–1183 (ICML, 2018).

Babaeizadeh, M., Finn, C., Erhan, D., Campbell, R. H. & Levine, S. Stochastic variational video prediction. International Conference on Learning Representations (ICLR, 2018).

Wang, Y., Gao, Z., Long, M., Wang, J. & Yu, P. S. PredRNN++: towards a resolution of the deep-in-time dilemma in spatiotemporal predictive learning. Proc. 35th International Conference on Machine Learning 80, 5123–5132 (2018).

Finn, C. & Levine, S. Deep visual foresight for planning robot motion. In International Conference on Robotics and Automation 2786–2793 (IEEE, 2017).

Hsieh, J.-T., Liu, B., Huang, D.-A., Fei-Fei, L. F. & Niebles, J. C. Learning to decompose and disentangle representations for video prediction. In Advances in Neural Information Processing Systems 517–526 (NeurIPS, 2018).

Kalchbrenner, N. et al. Video pixel networks. Proc. 34th International Conference on Machine Learning 70, 1771–1779 (2017).

Qiu, J., Huang, G. & Lee, T. S. Visual sequence learning in hierarchical prediction networks and primate visual cortex. In Advances in Neural Information Processing Systems 2662–2673 (NeurIPS, 2019).

Wang, Y. et al. Eidetic 3D LSTM: a model for video prediction and beyond. International Conference on Learning Representations (ICLR, 2019).

Wang, Y., Long, M., Wang, J., Gao, Z. & Yu, P. S. PredRNN: recurrent neural networks for predictive learning using spatiotemporal LSTMs. In Advances in Neural Information Processing Systems 879–888 (NeurIPS, 2017).

Liu, W., Luo, W., Lian, D. & Gao, S. Future frame prediction for anomaly detection - a new baseline. In The IEEE Conference on Computer Vision and Pattern Recognition 6536–6545 (IEEE, 2018).

Tandiya, N., Jauhar, A., Marojevic, V. & Reed, J. H. Deep predictive coding neural network for RF anomaly detection in wireless networks. In 2018 IEEE International Conference on Communications Workshops (IEEE, 2018).

Ebert, F. et al. Visual foresight: model-based deep reinforcement learning for vision-based robotic control. Preprint at https://arxiv.org/pdf/1812.00568.pdf (2018).

Rao, R. P. N. & Sejnowski, T. J. Predictive sequence learning in recurrent neocortical circuits. In Advances in Neural Information Processing Systems 164–170 (NeurIPS, 2000).

Summerfield, C. et al. Predictive codes for forthcoming perception in the frontal cortex. Science 314, 1311–1314 (2006).

Bastos, A. M. et al. Canonical microcircuits for predictive coding. Neuron 76, 695–711 (2012).

Kanai, R., Komura, Y., Shipp, S. & Friston, K. Cerebral hierarchies: predictive processing, precision and the pulvinar. Philos. Trans. R. Soc. Lond. B Biol. Sci. 370, 20140169 (2015).

Srinivasan, M. V., Laughlin, S. B. & Dubs, A. Predictive coding: a fresh view of inhibition in the retina. Proc. R. Soc. Lond. B Biol. Sci. 216, 427–459 (1982).

Atick, J. J. Could information theory provide an ecological theory of sensory processing? Network: Computation in Neural Systems 22, 4–44 (1992).

Murray, S. O., Kersten, D., Olshausen, B. A., Schrater, P. & Woods, D. L. Shape perception reduces activity in human primary visual cortex. Proc. Natl Acad. Sci. USA 99, 15164–15169 (2002).

Spratling, M. W. Predictive coding as a model of response properties in cortical area V1. J. Neurosci. 30, 3531–3543 (2010).

Jehee, J. F. M. & Ballard, D. H. Predictive feedback can account for biphasic responses in the lateral geniculate nucleus. PLoS Comput. Biol. 5, 1–10 (2009).

Kumar, S. et al. Predictive coding and pitch processing in the auditory cortex. J. Cogn. Neurosci. 23, 3084–3094 (2011).

Zelano, C., Mohanty, A. & Gottfried, J. A. Olfactory predictive codes and stimulus templates in piriform cortex. Neuron 72, 178–187 (2011).

Mumford, D. On the computational architecture of the neocortex: II The role of cortico-cortical loops. Biol. Cybern. 66, 241–251 (1992).

Hubel, D. H. & Wiesel, T. N. Receptive fields and functional architecture of monkey striate cortex. J. Physiol. 195, 215–243 (1968).

Nassi, J. J., Lomber, S. G. & Born, R. T. Corticocortical feedback contributes to surround suppression in V1 of the alert primate. J. Neurosci. 33, 8504–8517 (2013).

Schmolesky, M. T. et al. Signal timing across the macaque visual system. J. Neurophysiol. 79, 3272–3278 (1998).

Hung, C. P., Kreiman, G., Poggio, T. & DiCarlo, J. J. Fast readout of object identity from macaque inferior temporal cortex. Science 310, 863–866 (2005).

Meyer, T. & Olson, C. R. Statistical learning of visual transitions in monkey inferotemporal cortex. Proc. Natl Acad. Sci. USA 108, 19401–19406 (2011).

Watanabe, E., Kitaoka, A., Sakamoto, K., Yasugi, M. & Tanaka, K. Illusory motion reproduced by deep neural networks trained for prediction. Front. Psychol. 9, 345 (2018).

Kanizsa, G. Organization in Vision: Essays on Gestalt Perception (Praeger, 1979).

Lee, T. S. & Nguyen, M. Dynamics of subjective contour formation in the early visual cortex. Proc. Natl Acad. Sci. USA 98, 1907–1911 (2001).

Nijhawan, R. Motion extrapolation in catching. Nature 370, 256–257 (1994).

Mackay, D. M. Perceptual stability of a stroboscopically lit visual field containing self-luminous objects. Nature 181, 507–508 (1958).

Eagleman, D. M. & Sejnowski, T. J. Motion integration and postdiction in visual awareness. Science 287, 2036–2038 (2000).

Khoei, M. A., Masson, G. S. & Perrinet, L. U. The flash-lag effect as a motion-based predictive shift. PLoS Comput. Biol. 13, 1–31 (2017).

Hogendoorn, H. & Burkitt, A. N. Predictive coding with neural transmission delays: a real-time temporal alignment hypothesis. eNeuro 6, e0412–18.2019 (2019).

Wojtach, W. T., Sung, K., Truong, S. & Purves, D. An empirical explanation of the flash-lag effect. Proc. Natl Acad. Sci. USA 105, 16338–16343 (2008).

Zhu, M. & Rozell, C. J. Visual nonclassical receptive field effects emerge from sparse coding in a dynamical system. PLoS Comput. Biol. 9, 1–15 (2013).

Chalk, M., Marre, O. & Tkačik, G. Toward a unified theory of efficient, predictive and sparse coding. Proc. Natl Acad. Sci. USA 115, 186–191 (2018).

Singer, Y. et al. Sensory cortex is optimized for prediction of future input. eLife 7, e31557 (2018).

Hunsberger, E. & Eliasmith, C. Training spiking deep networks for neuromorphic hardware. Preprint at https://arxiv.org/pdf/1611.05141.pdf (2016).

Boerlin, M., Machens, C. K. & Denève, S. Predictive coding of dynamical variables in balanced spiking networks. PLoS Comput. Biol. 9, 1–16 (2013).

Maass, W. in Pulsed Neural Networks (eds Maass, W. & Bishop, C. M.) 55–85 (MIT Press, 1999).

Nøkland, A. Direct feedback alignment provides learning in deep neural networks. In Advances in Neural Information Processing Systems 1037–1045 (NeurIPS, 2016).

Lillicrap, T. P., Cownden, D., Tweed, D. B. & Akerman, C. J. Random synaptic feedback weights support error backpropagation for deep learning. Nat. Commun. 7, 13276 (2016).

Hochreiter, S. & Schmidhuber, J. Long short-term memory. Neural Comput. 9, 1735–1780 (1997).

Shi, X. et al. Convolutional LSTM network: a machine learning approach for precipitation nowcasting. In Advances in Neural Information Processing Systems 802–810 (NeurIPS, 2015).

Goodfellow, I. et al. Generative adversarial nets. In Advances in Neural Information Processing Systems 2672–2680 (NeurIPS, 2014).

Kingma, D. P. & Welling, M. Auto-encoding variational Bayes. International Conference on Learning Representations (ICLR, 2014).

Rumelhart, D. E., Hinton, G. E. & Williams, R. J. Learning representations by back-propagating errors. Nature 323, 533–536 (1986).

Kingma, D. P. & Ba, J. Adam: a method for stochastic optimization. International Conference on Learning Representations (ICLR, 2015).

Adelson, E. H. & Bergen, J. R. Spatiotemporal energy models for the perception of motion. J. Opt. Soc. Am. A 2, 284–299 (1985).

McIntosh, L., Maheswaranathan, N., Nayebi, A., Ganguli, S. & Baccus, S. Deep learning models of the retinal response to natural scenes. In Advances in Neural Information Processing Systems 1369–1377 (NeurIPS, 2016).

Dura-Bernal, S., Wennekers, T. & Denham, S. L. Top-down feedback in an HMAX-like cortical model of object perception based on hierarchical Bayesian networks and belief propagation. PLoS ONE 7, 1–25 (2012).

Krizhevsky, A., Sutskever, I. & Hinton, G. E. ImageNet classification with deep convolutional neural networks. In Advances in Neural Information Processing Systems 1097–1105 (NeurIPS, 2012).

Simonyan, K. & Zisserman, A. Very deep convolutional networks for large-scale image recognition. International Conference on Learning Representations (ICLR, 2015).

He, K., Zhang, X., Ren, S. & Sun, J. Deep residual learning for image recognition. In The IEEE Conference on Computer Vision and Pattern Recognition 770–778 (IEEE, 2016).

Acknowledgements

This work was supported by IARPA (contract no. D16PC00002), the National Science Foundation (NSF IIS 1409097) and the Center for Brains, Minds and Machines (CBMM, NSF STC award CCF-1231216).

Author information

Contributions

W.L. and D.C. conceived the study. W.L. conceived the model and implemented the experiments and analysis. G.K. and D.C. supervised the study. All authors contributed to interpreting the results. All authors contributed to writing the manuscript.

Ethics declarations

Competing interests

The authors declare no competing interests.

Additional information

Publisher’s note Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Extended data

Extended Data Fig. 1 Length suppression analysis for A1 and R1 units.

The average (± s.e.m.) response of A1 and R1 units and exemplars are shown (expanding upon Fig. 2 in the main text). Red: original network. Blue: feedback weights from R2 to R1 set to zero. The average A1 response demonstrates length suppression, whereas the average R1 response does not show a strong effect, with some units showing length suppression overall (for example, unit 15, bottom right panel) and other units showing the opposite effect (for example, unit 33, bottom middle panel). The removal of feedback led to a significant decrease in length suppression in A1, with a mean (± s.e.m.) decrease in percent length suppression (\(\%\mathrm{LS} = 100 \times \frac{r_{\mathrm{max}} - r_{\mathrm{longest\;bar}}}{r_{\mathrm{max}}}\)) of 31 ± 7% (p = 0.0004, Wilcoxon signed-rank test, one-sided, z = 3.3). The R1 units exhibited a mean %LS decrease of 5 ± 6% upon removal of feedback, which was not statistically significant (p = 0.18, z = 0.93).
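As a rough illustration of the %LS measure defined in this caption, the following minimal sketch computes it for a single unit. The function name, the input layout (responses ordered from the shortest to the longest bar) and the numbers are assumptions made for illustration only; they are not taken from the authors' code or data.

```python
def percent_length_suppression(responses):
    """Return %LS = 100 * (r_max - r_longest_bar) / r_max for one unit.

    `responses` is a hypothetical list of the unit's responses, ordered
    from the shortest bar to the longest bar.
    """
    r_max = max(responses)
    if r_max <= 0:
        raise ValueError("r_max must be positive for %LS to be defined")
    r_longest_bar = responses[-1]
    return 100.0 * (r_max - r_longest_bar) / r_max


# Made-up tuning curves for one unit (arbitrary response units).
with_feedback = [2, 8, 10, 7, 5]      # response falls off for long bars
without_feedback = [2, 8, 10, 9, 8]   # weaker fall-off

ls_with = percent_length_suppression(with_feedback)
ls_without = percent_length_suppression(without_feedback)
decrease = ls_with - ls_without  # drop in %LS when feedback is removed
```

In this toy case the unit's %LS drops when feedback is removed, which is the direction of the effect reported for the A1 population above.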

Extended Data Fig. 2 Temporal dynamics in the A and R units in the PredNet.

The average response of A and R units to a set of naturalistic objects on a gray background, after training on the KITTI car-mounted camera dataset (ref. 13), is shown (expanding upon Fig. 3 in the main text). The A and R layers seem to generally exhibit on/off dynamics, similar to the E layers. R1 also seems to have another mode in its response, specifically a ramp up between time steps 3 and 5 post image onset. The responses are grouped per layer and consist of an average across all the units (all filters and spatial locations) in a layer. The mean responses were then normalized between 0 and 1. Given the large number of units in each layer, the s.e.m. is on the order of 1% of the mean. Responses for layer 0, the pixel layer, are omitted because of their heavy dependence on the input pixels for the A and R layers. Note that, by notation in the network’s update rules, the input image reaches the R layers at a time step after the E and A layers.
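The per-layer averaging and normalization described in this caption could be sketched as follows. The caption does not state how the mean responses were rescaled, so min-max scaling is an assumption here, as are the function names and the toy data.

```python
def mean_over_units(responses):
    """Average per time step; responses[u][t] is unit u at time step t."""
    n_units = len(responses)
    return [sum(column) / n_units for column in zip(*responses)]


def normalize_01(trace):
    """Min-max rescale a trace to [0, 1] (assumed normalization)."""
    lo, hi = min(trace), max(trace)
    if hi == lo:
        return [0.0 for _ in trace]  # flat trace: nothing to rescale
    return [(x - lo) / (hi - lo) for x in trace]


# Two hypothetical units from one layer, four time steps each.
layer_responses = [
    [0.0, 4.0, 2.0, 1.0],
    [2.0, 8.0, 4.0, 3.0],
]
mean_trace = mean_over_units(layer_responses)
normalized = normalize_01(mean_trace)
```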

Extended Data Fig. 3 Response differential between predicted and unpredicted sequences in the sequence learning experiment.

The percent increase of population peak response between predicted and unpredicted sequences is quantified for each PredNet layer. Positive values indicate a higher response for unpredicted sequences. *p < 0.05, **p < 0.005 (paired t-test, one-sided).
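One plausible reading of the percent increase in this caption is sketched below. Using the predicted-sequence peak as the denominator is an assumption, and the traces are made up; the sign convention follows the caption, with positive values meaning a higher response to unpredicted sequences.

```python
def percent_peak_increase(predicted_trace, unpredicted_trace):
    """Percent increase of the peak response, unpredicted vs. predicted.

    Assumes the predicted-sequence peak is the reference (denominator).
    """
    peak_pred = max(predicted_trace)
    peak_unpred = max(unpredicted_trace)
    if peak_pred <= 0:
        raise ValueError("predicted peak must be positive")
    return 100.0 * (peak_unpred - peak_pred) / peak_pred


# Hypothetical population responses over three time steps.
increase = percent_peak_increase([1.0, 4.0, 2.0], [1.0, 5.0, 2.0])
```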

Extended Data Fig. 4 Illusory contours responses for A and R units in the PredNet.

The mean ± s.e.m. is shown (expanding upon Fig. 5 in the main text). Averages are computed across filter channels at the central receptive field.

Extended Data Fig. 5 Quantification of illusory responsiveness in the illusory contours experiment.

Units in the monkey recordings of Lee and Nguyen (ref. 57) are compared to units in the PredNet. We follow Lee and Nguyen in calculating the following two measures for each unit: \(IC_a = \frac{R_i - R_a}{R_i + R_a}\) and \(IC_r = \frac{R_i - R_r}{R_i + R_r}\), where \(R_i\) is the response to the illusory contour (sum over stimulus duration), \(R_a\) is the response to amodal stimuli, and \(R_r\) is the response to the rotated image. For the PredNet, these indices were calculated separately for each unit (at the central receptive field) with a non-uniform response. Positive values, indicating a preference for the illusion, were observed for all subgroups. Mean ± s.e.m.; *p < 0.05 (t-test, one-sided).
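The two indices defined in this caption are simple contrast ratios, and a minimal sketch of their computation for one unit is given here. The function name and the response values are hypothetical; each argument stands for a response already summed over the stimulus duration.

```python
def illusory_contour_indices(r_i, r_a, r_r):
    """Return (IC_a, IC_r) for one unit.

    r_i: response to the illusory contour
    r_a: response to the amodal stimulus
    r_r: response to the rotated image
    """
    def contrast(x, y):
        if x + y == 0:
            raise ValueError("index undefined when both responses are zero")
        return (x - y) / (x + y)

    return contrast(r_i, r_a), contrast(r_i, r_r)


# Made-up responses; positive indices indicate a preference for the illusion.
ic_a, ic_r = illusory_contour_indices(r_i=3.0, r_a=1.0, r_r=2.0)
```

Both indices lie in [-1, 1] for non-negative responses, which makes units with very different response magnitudes comparable.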

Extended Data Fig. 6 Additional predictions by the PredNet model in the flash lag experiment.

The images shown consist of next-frame predictions by the PredNet model after four consecutive appearances of the outer bar. The model was trained on the KITTI car-mounted camera dataset (ref. 13).

Extended Data Fig. 7 Comparison of the PredNet to prior models.

The models under comparison form a non-exhaustive list of prior models that have been used to probe the phenomena explored here. The top section indicates whether a given model (column) exhibits each phenomenon (row). The bottom section considers various learning aspects of the models. From left to right, the models considered correspond to the works of Rao and Ballard (1999; ref. 9), Adelson and Bergen (1985; ref. 78), McIntosh et al. (2016; ref. 79), Spratling (2010; ref. 45), Jehee and Ballard (2009; ref. 46) and Dura-Bernal et al. (2012; ref. 80). Additionally, traditional deep CNNs are considered (for example, AlexNet (ref. 81), VGGNet (ref. 82) and ResNet (ref. 83)). The PredNet control (second column from right) refers to the model in Extended Data Figs. 9 and 10.

Extended Data Fig. 8 Comparison of PredNet predictions in the flash-lag illusion experiment to psychophysical estimates.

The psychophysical estimates come from Nijhawan (1994; ref. 58). With the frame rate of 10 Hz used to train the PredNet as a reference, the average angular difference between the inner and outer bars in the PredNet predictions was quantified for various rotation speeds. The results are compared to the perceptual estimates obtained using two human subjects by Nijhawan. Mean and standard deviation are shown. For rotation speeds up to and including 25 rotations per minute (RPM), the PredNet estimates align well with the psychophysical results. At 35 RPM, the PredNet predictions become noisy and inconsistent, as evidenced by the high standard deviation.
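To give a sense of scale for the rotation speeds mentioned in this caption, the sketch below converts RPM into the angle a bar sweeps between consecutive frames at the 10 Hz reference frame rate. This is only a unit conversion under that stated frame rate; it is not the authors' analysis of angular difference, and the function name is hypothetical.

```python
def degrees_per_frame(rpm, frame_rate_hz=10.0):
    """Angle (degrees) swept per frame by a bar rotating at `rpm`."""
    degrees_per_second = rpm * 360.0 / 60.0
    return degrees_per_second / frame_rate_hz


step_at_25_rpm = degrees_per_frame(25)  # 15 degrees between frames
step_at_35_rpm = degrees_per_frame(35)  # 21 degrees between frames
```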

Extended Data Fig. 9 PredNet control model lacking explicit penalization of activity in “error units”.

An additional convolutional block (\(\hat A_0^{frame}\)) is added that generates the next-frame prediction given input from R0. The predicted frame is used in a direct L1 loss, and the activity of the E units is removed from the training loss altogether. Thus, in this control model, the E units are unconstrained and there is no explicit encouragement of activity minimization in the network.
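The contrast between the two training objectives can be sketched schematically as below. Frames and layers are represented as flat lists of floats, and the mean reduction and per-layer weights are assumptions made for illustration; this is not the implementation in the PredNet repository.

```python
def l1_frame_loss(predicted_frame, actual_frame):
    """Control-style objective: mean absolute pixel error on the frame."""
    if len(predicted_frame) != len(actual_frame):
        raise ValueError("frames must have the same number of pixels")
    total = sum(abs(p - a) for p, a in zip(predicted_frame, actual_frame))
    return total / len(actual_frame)


def error_activity_loss(e_layers, layer_weights):
    """Original-style objective: weighted sum of mean E-unit activity.

    `layer_weights` are hypothetical per-layer weights.
    """
    return sum(w * sum(e) / len(e) for w, e in zip(layer_weights, e_layers))


# Toy values: a three-pixel frame and two small E layers.
control_loss = l1_frame_loss([0.5, 0.25, 1.0], [0.5, 0.75, 0.0])
original_loss = error_activity_loss([[1.0, 3.0], [2.0, 2.0]], [1.0, 0.5])
```

Only the second function depends on E-unit activity, which is why removing it from the objective leaves the E units unconstrained in the control model.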

Extended Data Fig. 10 Results of control model with the removal of explicit minimization of “error” activity in the PredNet.

Overall, the control model reproduces the neural phenomena presented here less faithfully. a) The control network E1 units exhibit enhanced length suppression when feedback is removed (the opposite of the effect in biology and in the original PredNet). b) The responses in the control network still peak upon image onset and offset; however, the decay in activity after the peak is non-monotonic in several layers and less dramatic overall than in the results shown in Fig. 3. In contrast to the 20–49% decrease in response after the image onset peak in the original PredNet and the 44% decrease in the macaque IT data of Hung et al. (ref. 53), the control network exhibited a 5% (E1) to 30% (E2) decrease. c) Response of the control network E3 layer in the sequence pairing experiment. The unpredicted images actually elicit a higher response than the first image in the sequence, and the predicted images elicit hardly any response; both effects differ qualitatively from the macaque IT data of Meyer and Olson (ref. 54) and from the original PredNet. d,e) The average E1 response in the control network demonstrates a decrease in activity upon presentation of the illusory contour.

Cite this article

Lotter, W., Kreiman, G. & Cox, D. A neural network trained for prediction mimics diverse features of biological neurons and perception. Nat Mach Intell 2, 210–219 (2020). https://doi.org/10.1038/s42256-020-0170-9

DOI: https://doi.org/10.1038/s42256-020-0170-9
