Abstract

A position and orientation measurement method is investigated in which the camera is calibrated by the projection geometry of the skew-symmetric Plücker matrices of 3D lines. The relationship between the Plücker matrices of the dual 3D lines and the 2D projective lines is derived in two perpendicular world coordinate planes. The transformation matrix is generated from the projections of the 3D lines. The differences between the coordinates of the reprojected lines and the coordinates of the extracted lines are employed to verify the calibration validity. Moreover, the differences between the standard movement distance of the target and the measured distance are presented to compare the calibration accuracy of the 3D line to 2D line method with that of the point-based method. Furthermore, we explore the noise immunity of the two methods by adding Gaussian noise. Finally, the position and orientation of a cart are measured as an application case of this method. The results are tabulated so that readers can reproduce them. The results demonstrate that the line to line method provides higher calibration accuracy and better noise immunity, and that position and orientation measurement adopting the line to line method is valid for future applications.

Introduction

A camera is an important measurement instrument because it maps 3D space to the 2D image [1,2]. Camera calibration estimates the transformation matrix of the camera from a captured photograph [3]. Consequently, camera calibration is widely studied in vision measurement and optical inspection, for example in object reconstruction [4], computed tomography [5], pose estimation [6], and robot arm positioning [7]. Because the transformation matrix of the camera contains the position and orientation of a measured object in a captured image, we focus on position and orientation measurement using a camera calibrated by the projection geometry of the Plücker matrices of three-dimensional lines.

Various methodologies have been explored to solve the camera calibration problem. They can be broadly classified by the dimension of the calibration target: 3D, 2D, or 1D. The 1D target was first described by Zhang [8]; the target must rotate about a fixed point during calibration. Qi [9] introduced a calibration method using a 1D object with three or more markers, in which the constraint equations of the camera parameters are provided by the rotation around one marker that moves in a plane. 1D calibration offers a simple structure and easy operation. However, its accuracy is generally low because a 1D bar carries insufficient information. Consequently, most calibration methods are based on 3D or 2D targets. To improve calibration accuracy, Ricolfeviala [10] proposed an optimal calibration method based on several images of a 2D pattern, together with the conditions under which the calibration is resolved accurately. Bethea [11] developed a camera calibration technique employing three parallel calibration planes and two cameras. Heikkila [12] presented an approach that calibrates the camera from circular control points identified on two perpendicular planes. In this paper, a 3D target is chosen to calibrate the camera because of its high calibration accuracy and the sufficient information it provides.

Various patterns are employed on calibration objects, such as points, circles, lines, and color patterns. Most calibrations adopt feature points of the target [13,14]. Point-based calibration is fast and easy to operate, but it is easily affected by image noise. Circle-pattern-based calibration also attracts many investigators because of its high noise immunity. Xue [15] describes a method using concentric circles and wedge grating for camera calibration. An improved calibration method is proposed by Rui [16] to increase the camera and projector calibration accuracy simultaneously by detecting the edges of the circles. Xu [17] investigates a camera calibration method using the perpendicularity of 2D lines in the target observations. Yilmaztürk [18] presents a study on fully automatic calibration of color digital cameras using color targets; nevertheless, color distortion is unavoidable when capturing color photographs. Although circle-pattern-based calibration offers high noise immunity, it is inefficient because extracting circles is slow. The line-pattern-based calibration method is selected in this paper because lines can be extracted at moderate speed and offer good noise immunity. The original line-pattern-based calibration method employs the geometric relationship between a 2D line on a planar calibration target and its 2D projective line in the image; in essence, it is a 2D line to 2D line homography. A 3D calibration target is preferred here for its high accuracy. However, it is difficult to build the mapping from a 3D line on the target to a 2D line in the image, because the coordinates of a 3D line are generally given by the equations of two planes. Therefore, calibration methods that adopt the projective geometry from 3D lines to 2D projective lines are lacking.

In this paper, the position and orientation of an object are obtained from an image captured by a calibrated camera. We therefore first explore a camera calibration method adopting the projection geometry from the Plücker matrices of 3D lines to the 2D projective lines. A projective line in the image is determined by the corresponding 3D line on the calibration target and the projective plane. The transformation matrix of the camera is generated from the geometrical relationship between the 3D lines and the 2D projective lines. The 3D line to 2D line method is compared with the point-based method to verify the measurement validity, the measurement accuracy and the noise immunity. Then, a cart carrying the 3D target is chosen as the application example. The transformation matrix of the camera is decomposed into the intrinsic matrix, the rotation matrix and the translation vector. The position and orientation of the measured cart are generated from the translation vector and the rotation matrix, and verified by the absolute and relative errors of the reconstructed displacements.

Results

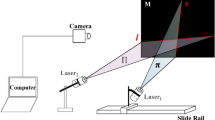

According to the 3D line to 2D line method, the transformation matrix P is generated from n 3D lines Li and n 2D projective lines li. The coordinates of the 2D projective lines are extracted by the Hough transform [19]. The recognition results are shown in Fig. 1 and indicate that the Hough transform extracts the lines accurately.
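As a small illustration of how an extracted line can be brought into the homogeneous form (l1, l2, 1) used by the calibration, the sketch below converts a line given in the normal form x cos θ + y sin θ = ρ, which many Hough-transform implementations return. The helper name `hough_to_homogeneous` and the (ρ, θ) parameterization are assumptions made for illustration; the text does not state which output format its Hough step uses.

```python
import numpy as np

def hough_to_homogeneous(rho, theta):
    """Convert x*cos(theta) + y*sin(theta) = rho to the line vector (l1, l2, 1).

    Hypothetical helper: dividing the normal form by -rho gives
    l1*x + l2*y + 1 = 0, which is undefined for lines through the origin.
    """
    if abs(rho) < 1e-12:
        raise ValueError("a line through the image origin cannot be scaled to (l1, l2, 1)")
    return np.array([-np.cos(theta) / rho, -np.sin(theta) / rho, 1.0])

# Illustrative values only (pixels, radians).
theta = np.deg2rad(30.0)
line = hough_to_homogeneous(rho=250.0, theta=theta)

# The foot of the perpendicular from the origin lies on the line.
foot = np.array([250.0 * np.cos(theta), 250.0 * np.sin(theta), 1.0])
residual = float(line @ foot)  # incidence l^T x, zero for a point on the line
```

The third component is fixed to 1, so a line passing through the image origin would need a different normalization, for example unit norm.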

The differences between the coordinates of the reprojected lines and the line coordinates extracted by the Hough transform are employed to evaluate the accuracy of the 3D line to 2D line method. The comparison of the average logarithmic errors of the 3D line to 2D line method and the point-based method [20] is shown in Fig. 2. The image resolution is 1024 × 768. Moreover, to explore the relationship between the errors and the movement distance, the calibration board is moved by 10 mm, 20 mm, 30 mm, and 40 mm, respectively. In the first group of experiments, the images are captured at a measurement distance of 1000 mm. The means of the average logarithmic errors of the line to line method are 1.11 × 10⁻⁴, 1.67 × 10⁻⁴, 2.78 × 10⁻⁴, and 4.09 × 10⁻⁴ for movement distances of 10 mm, 20 mm, 30 mm, and 40 mm. The corresponding means for the point-based method are 3.19 × 10⁻⁴, 5.11 × 10⁻⁴, 8.55 × 10⁻⁴, and 1.41 × 10⁻³.

The second group of experiments is performed at a smaller measurement distance of 800 mm. As in the first group, the 3D line to 2D line method is compared with the point-based method in Fig. 3. The means of the average logarithmic errors of the line to line method are 1.01 × 10⁻⁴, 1.61 × 10⁻⁴, 2.50 × 10⁻⁴, and 3.99 × 10⁻⁴ for movement distances of 10 mm, 20 mm, 30 mm, and 40 mm. The corresponding means for the point-based method are 3.12 × 10⁻⁴, 4.81 × 10⁻⁴, 8.08 × 10⁻⁴, and 1.34 × 10⁻³. Both groups of experiments show that the errors increase with the movement distance. Moreover, the errors of the 3D line to 2D line method are smaller than those of the point-based method at every movement distance, which indicates that the line to line method provides higher calibration accuracy. Furthermore, the errors at 800 mm are smaller than the errors at 1000 mm: both methods achieve higher measurement accuracy at the smaller measurement distance.

Furthermore, three levels of Gaussian noise are added to study the effect of noise. The 3D line to 2D line calibration method is again compared with the point-based method in the two groups of experiments. The measurement errors are evaluated by

$$\Delta L = \left| L' - L \right| \tag{1}$$

where L′ is the reconstructed movement distance of the calibration board from the first position to the next, generated from P, and L is the standard movement distance. The standard distances are 10 mm, 20 mm, 30 mm, and 40 mm. We perform 20 experiments at the standard distances. The results of the two groups of experiments are shown in Figs 4 and 5, respectively. In the first group of experiments, the means of ΔL for the 3D line to 2D line method without noise are 0.26 mm, 0.43 mm, 0.84 mm, and 1.35 mm at movement distances of 10 mm, 20 mm, 30 mm, and 40 mm. The corresponding errors of the point-based method are 0.36 mm, 0.72 mm, 1.03 mm, and 1.54 mm, which are evidently larger than those of the 3D line to 2D line method. When the noise level is 0.0001, the mean errors of the line to line method are 0.31 mm, 0.56 mm, 0.99 mm, and 1.51 mm, and those of the point-based method are 0.42 mm, 0.80 mm, 1.14 mm, and 1.70 mm. The mean errors of the line to line method under the noise of 0.005 are 0.35 mm, 0.55 mm, 0.98 mm, and 1.63 mm, and those of the point-based method under the noise level of 0.0005 are 0.44 mm, 0.84 mm, 1.18 mm, and 1.79 mm. The mean errors of the line to line method under the noise of 0.01 are 0.39 mm, 0.64 mm, 1.16 mm and 1.64 mm as the movement distance increases from 10 mm to 40 mm, and those of the point-based method are 0.46 mm, 0.92 mm, 1.24 mm, and 1.95 mm.

The second group of experiments is carried out at the measurement distance of 800 mm. The mean ΔL of the 3D line to 2D line method without noise is 0.14 mm, 0.49 mm, 0.63 mm, and 1.23 mm at movement distances of 10 mm, 20 mm, 30 mm, and 40 mm. The corresponding mean errors of the point-based method are 0.28 mm, 0.68 mm, 0.90 mm, and 1.43 mm, so the mean errors of the line to line method are again smaller. When the 0.0001 noise is added, the mean errors of the 3D line to 2D line method are 0.19 mm, 0.59 mm, 0.77 mm, and 1.38 mm, and those of the point-based method are 0.34 mm, 0.76 mm, 1.00 mm, and 1.55 mm. The mean errors of the line to line method under the noise level of 0.005 are 0.21 mm, 0.65 mm, 0.84 mm, and 1.46 mm, and those of the point-based method under the noise of 0.0005 are 0.37 mm, 0.85 mm, 1.07 mm, and 1.67 mm. The mean errors of the 3D line to 2D line method under the noise level of 0.01 are 0.25 mm, 0.67 mm, 0.88 mm and 1.65 mm as the movement distance grows from 10 mm to 40 mm, and those of the point-based method are 0.44 mm, 0.95 mm, 1.11 mm, and 1.77 mm.

To illustrate how the technique can be used, an example is provided that measures the position and orientation of a cart. The setup is illustrated in Fig. 6: a 3D target is attached to the top of the cart, and the cart is translated by displacements of 10 mm, 20 mm, 30 mm and 40 mm, respectively.

The positions tx, ty, tz and orientations α, β, γ of the cart about the o-x, o-y, o-z axes of the camera coordinate system at 20 different positions are shown in Fig. 7(a–f). Because the orientations α, β, γ remain stable in Fig. 7(a–c), the cart moves along a straight line. From the position data in Fig. 7(d–f), the displacement of the cart is obtained as the norm of the difference between the translation vectors at the first and second positions in the camera coordinate system. The absolute and relative errors of the reconstructed displacements in Fig. 7(g–h) serve as indicators to verify the measurement results of the cart. The measurement results are listed in Table 1.

Figure 7. (a–c) Rotation angles α, β, γ about the o-x, o-y, o-z axes of the camera coordinate system. (d–f) Translations tx, ty, tz along the o-x, o-y, o-z axes of the camera coordinate system. (g) Absolute errors of the reconstructed displacements. (h) Relative errors of the reconstructed displacements.
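The displacement check described above can be sketched in a few lines: the reconstructed displacement is the norm of the difference between two translation vectors, and the absolute and relative errors compare it with the standard displacement. The function name and the two translation vectors are hypothetical; the vectors are chosen only to resemble the reported ranges and are not measured data.

```python
import numpy as np

def displacement_errors(t_first, t_second, standard_distance):
    """Return (reconstructed distance, absolute error, relative error)."""
    diff = np.asarray(t_second, dtype=float) - np.asarray(t_first, dtype=float)
    reconstructed = float(np.linalg.norm(diff))
    absolute = abs(reconstructed - standard_distance)
    return reconstructed, absolute, absolute / standard_distance

# Hypothetical translation vectors in mm (camera coordinate system).
t1 = [-778.5, 90.2, 1121.0]
t2 = [-768.7, 90.3, 1121.2]
dist, abs_err, rel_err = displacement_errors(t1, t2, standard_distance=10.0)
print(f"reconstructed = {dist:.3f} mm, error = {abs_err:.3f} mm, relative = {100 * rel_err:.2f}%")
```

Because only the difference of the two translation vectors enters, a constant offset in the camera-to-target translation cancels out of the displacement.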

When the movement displacement is 10 mm, the maxima of the translations tx, ty, tz are −778.28 mm, 90.41 mm and 1121.14 mm, and the minima are −778.64 mm, 89.98 mm and 1120.76 mm, respectively. The maxima of the rotation angles α, β, γ are 24.92°, 44.34° and 52.86°, and the minima are 24.54°, 44.05°, and 52.45°, respectively. The means of the measurement errors ΔL and the relative errors ΔL/d are 0.18 mm and 1.85%, respectively. When the movement displacement increases to 40 mm, the maximal translations tx, ty, tz are −745.61 mm, 91.17 mm and 1123.78 mm, and the minimal translations are −749.82 mm, 88.27 mm and 1120.13 mm, respectively. The maximal rotation angles α, β, γ are 26.77°, 46.37° and 55.43°, and the minimal rotation angles are 23.06°, 42.99°, and 51.55°, respectively. The means of the measurement errors and relative errors grow to 1.78 mm and 4.45%, respectively.

According to the above analysis and the data in Table 1, the translations ty and tz do not vary noticeably, so the o-x direction is the major movement direction. Moreover, the rotation angles about the three axes vary only slightly because the cart is moved along a straight line. The mean measurement error increases with the movement displacement, and so does the relative error. Finally, the relative errors are less than 5% in most of the experiments, which indicates that the measurement method is valid for determining the orientation and position of an object.

Discussion

According to the analysis above, the mean errors of both the 3D line to 2D line method and the point-based method grow with increasing noise, and the mean errors of the 3D line to 2D line method are smaller than those of the point-based method. In the test without noise, the line to line method has a maximum relative error of 7.42% and a minimum relative error of 0.68%; the corresponding values for the point-based method are 7.83% and 1.13%. In the test with noise, the line to line method has a maximum relative error of 8.14% and a minimum relative error of 0.30%; the corresponding values for the point-based method are 8.17% and 0.55%. The results show that the 3D line to 2D line method provides higher noise immunity. Moreover, the errors at the 800 mm measurement distance are smaller than those at the 1000 mm measurement distance. The data reveal that the two calibration methods provide higher noise immunity in near-camera measurement. In the example measuring the position and orientation of the object, the proposed method achieves mean errors of 0.18 mm, 0.83 mm, 1.05 mm and 1.78 mm for the measurement displacements of 10 mm, 20 mm, 30 mm and 40 mm. This indicates that the method is workable and reliable in position and orientation measurement applications.

Methods

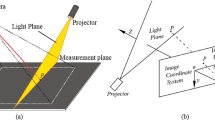

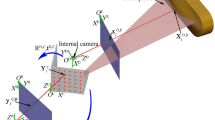

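The calibration method is illustrated in Fig. 8. A 3D line $\mathbf{L}_i$ on the 3D target is projected to the 2D image plane. The projective line $\mathbf{l}_i$ in the image plane is denoted by

$$\mathbf{l}_i^{\mathrm{T}} \mathbf{x}_j = 0 \tag{2}$$

where $\mathbf{l}_i = (l_{i1}, l_{i2}, 1)^{\mathrm{T}}$ is the homogeneous coordinate of the projected line in the image plane and $\mathbf{x}_j = (x_{1j}, x_{2j}, 1)^{\mathrm{T}}$ is the homogeneous coordinate of a point on the projective line $\mathbf{l}_i$.

The projective plane $\mathbf{Q}_i$, which passes through the camera center and the 2D projective line $\mathbf{l}_i$, is determined by [21]

$$\mathbf{Q}_i = \mathbf{P}^{\mathrm{T}} \mathbf{l}_i \tag{3}$$

where $\mathbf{P} = [p_{mn}]_{3\times 4}$ is the projection matrix of the camera and $\mathbf{l}_i = [l_{i1}, l_{i2}, 1]^{\mathrm{T}}$ is the vector of the projective line.

The 3D line in which the projective plane $\mathbf{Q}_i$ intersects the O-XZ plane of the target can be expressed by the Plücker matrix [21] as

$$\mathbf{L}_i^{*} = \mathbf{Q}_i \mathbf{Q}_1^{\mathrm{T}} - \mathbf{Q}_1 \mathbf{Q}_i^{\mathrm{T}} \tag{4}$$

where $\mathbf{Q}_1 = [0, 1, 0, 0]^{\mathrm{T}}$ is the vector of the O-XZ plane of the target.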
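The construction of the crossing line from two planes can be checked numerically. The sketch below builds the projective plane as P^T l, forms the skew-symmetric plane-based Plücker matrix with the O-XZ plane Q1 = [0, 1, 0, 0]^T, and verifies that a point lying on both planes is annihilated by it. The projection matrix, the image line and the helper name `dual_plucker` are hypothetical values chosen for illustration, and the sign convention (first plane times second plane transposed, minus the reverse) is an assumption.

```python
import numpy as np

def dual_plucker(plane_a, plane_b):
    """Plane-based Plücker matrix a b^T - b a^T of the line where two planes meet."""
    a = np.asarray(plane_a, dtype=float).reshape(4, 1)
    b = np.asarray(plane_b, dtype=float).reshape(4, 1)
    return a @ b.T - b @ a.T

# Hypothetical projection matrix and image line (l1, l2, 1).
P = np.array([[800.0, 0.0, 320.0, 100.0],
              [0.0, 800.0, 240.0, -50.0],
              [0.0, 0.0, 1.0, 5.0]])
l = np.array([0.002, -0.001, 1.0])

Q_i = P.T @ l                          # plane through the camera center and the image line
Q_1 = np.array([0.0, 1.0, 0.0, 0.0])   # O-XZ plane of the target (y = 0)
L_star = dual_plucker(Q_i, Q_1)

# A point with y = 0 that also lies on Q_i: pick z = 1 and solve for x.
z = 1.0
x = -(Q_i[2] * z + Q_i[3]) / Q_i[0]
X = np.array([x, 0.0, z, 1.0])

is_skew = np.allclose(L_star, -L_star.T)
on_line = np.allclose(L_star @ X, 0.0)
```

For a point that is not on the crossing line, the product of the matrix with the point is the plane spanned by the point and the line rather than the zero vector.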

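Substituting equation (3) into equation (4), the 3D crossing line is

$$\mathbf{L}_i^{*} = \mathbf{P}^{\mathrm{T}} \mathbf{l}_i \mathbf{Q}_1^{\mathrm{T}} - \mathbf{Q}_1 \mathbf{l}_i^{\mathrm{T}} \mathbf{P} \tag{5}$$

Equation (5) discloses the relationship between a 3D line $\mathbf{L}_i^{*}$ and its 2D projective line $\mathbf{l}_i$. According to the definitions of $\mathbf{Q}_1$, $\mathbf{l}_i$ and $\mathbf{P}$, we have

$$\mathbf{L}_i^{*} = \begin{bmatrix} 0 & q_{i1} & 0 & 0 \\ -q_{i1} & 0 & -q_{i3} & -q_{i4} \\ 0 & q_{i3} & 0 & 0 \\ 0 & q_{i4} & 0 & 0 \end{bmatrix}, \qquad q_{in} = l_{i1} p_{1n} + l_{i2} p_{2n} + p_{3n} \tag{6}$$

On the other hand, the dual 3D line of the 3D line $\mathbf{L}_i^{*}$ is defined by the Plücker matrix [21] as

$$\mathbf{L}_i = \mathbf{X}_{Ai} \mathbf{X}_{Bi}^{\mathrm{T}} - \mathbf{X}_{Bi} \mathbf{X}_{Ai}^{\mathrm{T}} \tag{7}$$

where $\mathbf{X}_{Ai} = [x_{Ai}, 0, z_{Ai}, 1]^{\mathrm{T}}$ and $\mathbf{X}_{Bi} = [x_{Bi}, 0, z_{Bi}, 1]^{\mathrm{T}}$ are the vectors of the 3D points $A_i$, $B_i$ on the 3D line $\mathbf{L}_i$ of the target. Then, we have

$$\mathbf{L}_i = \begin{bmatrix} 0 & 0 & x_{Ai} z_{Bi} - x_{Bi} z_{Ai} & x_{Ai} - x_{Bi} \\ 0 & 0 & 0 & 0 \\ x_{Bi} z_{Ai} - x_{Ai} z_{Bi} & 0 & 0 & z_{Ai} - z_{Bi} \\ x_{Bi} - x_{Ai} & 0 & z_{Bi} - z_{Ai} & 0 \end{bmatrix} \tag{8}$$

Considering the relationship between the elements $l^{*}_{mn}$ of the 3D line $\mathbf{L}_i^{*}$ and the elements $l_{mn}$ of its dual $\mathbf{L}_i$ [22],

$$l_{12} : l_{13} : l_{14} : l_{23} : l_{42} : l_{34} = l^{*}_{34} : l^{*}_{42} : l^{*}_{23} : l^{*}_{14} : l^{*}_{13} : l^{*}_{12} \tag{9}$$

the 3D line $\mathbf{L}_i^{*}$ can also be derived from

$$\mathbf{L}_i^{*} = \begin{bmatrix} 0 & z_{Ai} - z_{Bi} & 0 & 0 \\ z_{Bi} - z_{Ai} & 0 & x_{Ai} - x_{Bi} & x_{Bi} z_{Ai} - x_{Ai} z_{Bi} \\ 0 & x_{Bi} - x_{Ai} & 0 & 0 \\ 0 & x_{Ai} z_{Bi} - x_{Bi} z_{Ai} & 0 & 0 \end{bmatrix} \tag{10}$$

From equations (6) and (10), we have

$$\mathbf{G}_{1i}\, \mathbf{p} = \mathbf{f}_{1i} \tag{11}$$

where

$$\mathbf{G}_{1i} = \begin{bmatrix} l_{i1} & 0 & 0 & 0 & l_{i2} & 0 & 0 & 0 & 1 & 0 & 0 & 0 \\ 0 & 0 & -l_{i1} & 0 & 0 & 0 & -l_{i2} & 0 & 0 & 0 & -1 & 0 \\ 0 & 0 & 0 & -l_{i1} & 0 & 0 & 0 & -l_{i2} & 0 & 0 & 0 & -1 \end{bmatrix},$$

$\mathbf{p} = [p_{11}, p_{12}, p_{13}, p_{14}, p_{21}, p_{22}, p_{23}, p_{24}, p_{31}, p_{32}, p_{33}, p_{34}]^{\mathrm{T}}$, and $\mathbf{f}_{1i} = [z_{Ai} - z_{Bi},\; x_{Ai} - x_{Bi},\; x_{Bi} z_{Ai} - x_{Ai} z_{Bi}]^{\mathrm{T}}$.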
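The link between the plane-based and the point-based description of a line on the O-XZ plane can be checked with a few lines of code. Writing the projective plane as P^T l = (q1, q2, q3, q4), the sketch verifies that the vector (q1, −q3, −q4) is parallel to f1i = [zA − zB, xA − xB, xB zA − xA zB]^T. The projection matrix and the two target points are hypothetical; the sign pattern assumes the crossing line is written as Q_i Q_1^T − Q_1 Q_i^T; and the comparison is made up to scale because Plücker matrices are homogeneous.

```python
import numpy as np

# Hypothetical projection matrix and two points A, B on the O-XZ plane (y = 0).
P = np.array([[800.0, 0.0, 320.0, 100.0],
              [0.0, 800.0, 240.0, -50.0],
              [0.0, 0.0, 1.0, 5.0]])
xA, zA = 1.0, 2.0
xB, zB = -0.5, 3.0
A = np.array([xA, 0.0, zA, 1.0])
B = np.array([xB, 0.0, zB, 1.0])

# Image line through the projections of A and B, scaled to (l1, l2, 1).
l = np.cross(P @ A, P @ B)
l = l / l[2]

# Plane through the camera center and the image line.
q = P.T @ l

g = np.array([q[0], -q[2], -q[3]])                  # entries (1,2), (2,3), (2,4) of the plane-based matrix
f = np.array([zA - zB, xA - xB, xB * zA - xA * zB])  # f_1i built from the target points

# Parallel vectors have a vanishing cross product.
parallel = np.allclose(np.cross(g, f), 0.0, atol=1e-9)
scale = g[0] / f[0]   # generally not 1: it depends on the normalization of l and the choice of A, B
```

The scale factor changes from line to line, which is worth keeping in mind when the stacked system is treated as non-homogeneous.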

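In a similar way, with the O-YZ plane vector $\mathbf{Q}_2 = [1, 0, 0, 0]^{\mathrm{T}}$ and the points $\mathbf{X}_{Ci} = [0, y_{Ci}, z_{Ci}, 1]^{\mathrm{T}}$, $\mathbf{X}_{Di} = [0, y_{Di}, z_{Di}, 1]^{\mathrm{T}}$, the Plücker matrices of the 3D lines on the O-YZ plane of the target provide

$$\mathbf{G}_{2i}\, \mathbf{p} = \mathbf{f}_{2i} \tag{12}$$

where

$$\mathbf{G}_{2i} = -\begin{bmatrix} 0 & l_{i1} & 0 & 0 & 0 & l_{i2} & 0 & 0 & 0 & 1 & 0 & 0 \\ 0 & 0 & l_{i1} & 0 & 0 & 0 & l_{i2} & 0 & 0 & 0 & 1 & 0 \\ 0 & 0 & 0 & l_{i1} & 0 & 0 & 0 & l_{i2} & 0 & 0 & 0 & 1 \end{bmatrix},$$

and $\mathbf{f}_{2i} = [z_{Ci} - z_{Di},\; y_{Di} - y_{Ci},\; y_{Ci} z_{Di} - z_{Ci} y_{Di}]^{\mathrm{T}}$.

The stacking of equations (11) and (12) is

$$\begin{bmatrix} \mathbf{G}_1 \\ \mathbf{G}_2 \end{bmatrix} \mathbf{p} = \begin{bmatrix} \mathbf{f}_1 \\ \mathbf{f}_2 \end{bmatrix} \tag{13}$$

where $\mathbf{G}_1 = [\mathbf{G}_{11}^{\mathrm{T}}, \ldots, \mathbf{G}_{1n}^{\mathrm{T}}]^{\mathrm{T}}$, $\mathbf{G}_2 = [\mathbf{G}_{21}^{\mathrm{T}}, \ldots, \mathbf{G}_{2n}^{\mathrm{T}}]^{\mathrm{T}}$, $\mathbf{f}_1 = [\mathbf{f}_{11}^{\mathrm{T}}, \ldots, \mathbf{f}_{1n}^{\mathrm{T}}]^{\mathrm{T}}$, and $\mathbf{f}_2 = [\mathbf{f}_{21}^{\mathrm{T}}, \ldots, \mathbf{f}_{2n}^{\mathrm{T}}]^{\mathrm{T}}$.

Writing $\mathbf{G}$ and $\mathbf{f}$ for the stacked matrix and vector in equation (13), the non-homogeneous linear equations are solved by

$$\mathbf{p} = (\mathbf{G}^{\mathrm{T}} \mathbf{G})^{-1} \mathbf{G}^{\mathrm{T}} \mathbf{f} \tag{14}$$

According to the vector $\mathbf{p}$ solved by equation (14), the projection matrix $\mathbf{P} = [p_{mn}]_{3\times 4}$ is obtained and can be denoted by its decomposition as [21]

$$\mathbf{P} = \mathbf{A}\, [\mathbf{R} \;\; \mathbf{t}] \tag{15}$$

where $\mathbf{A}$ is the intrinsic matrix of the camera, $\mathbf{R} = [r_{mn}]_{3\times 3}$ is the rotation matrix and $\mathbf{t} = [t_x, t_y, t_z]^{\mathrm{T}}$ is the translation vector from the world coordinate system defined on the 3D target to the camera coordinate system.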
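The linear calibration step can be sketched on synthetic, noise-free data. Because Plücker matrices are homogeneous, the vector built from the projective plane P^T l matches f1i or f2i only up to a scale factor per line, so this sketch deliberately swaps the non-homogeneous least-squares solve of equation (14) for a scale-free variant: it stacks the cross-product constraints f × g = 0 for lines on the O-XZ and O-YZ planes and takes the null vector by SVD. All function names, the camera parameters and the random target lines are hypothetical, and the sign pattern of g assumes the crossing line is written as Q_i Q_k^T − Q_k Q_i^T.

```python
import numpy as np

def q_rows(l):
    """4x12 matrix S such that S @ p equals P^T l for row-major p = vec(P)."""
    S = np.zeros((4, 12))
    for n in range(4):
        S[n, [n, 4 + n, 8 + n]] = l
    return S

def skew(f):
    """Cross-product matrix, so that skew(f) @ g equals np.cross(f, g)."""
    return np.array([[0.0, -f[2], f[1]], [f[2], 0.0, -f[0]], [-f[1], f[0], 0.0]])

def constraint_rows(l, f, plane):
    """Rows of f x g = 0 with g = (q1, -q3, -q4) for 'xz' or (-q2, -q3, -q4) for 'yz'."""
    S = q_rows(l)
    G = np.vstack([S[0], -S[2], -S[3]]) if plane == "xz" else -S[[1, 2, 3]]
    return skew(f) @ G

def calibrate(observations):
    """Null vector of the stacked constraints, reshaped to a 3x4 matrix (defined up to scale)."""
    M = np.vstack([constraint_rows(l, f, plane) for l, f, plane in observations])
    return np.linalg.svd(M)[2][-1].reshape(3, 4)

def synthetic_observation(P, plane, rng):
    """Random line on one target plane: returns its image line, its f vector and the plane tag."""
    (u1, v1), (u2, v2) = rng.uniform(-1.0, 1.0, size=(2, 2))
    if plane == "xz":
        A, B = np.array([u1, 0.0, v1, 1.0]), np.array([u2, 0.0, v2, 1.0])
        f = np.array([v1 - v2, u1 - u2, u2 * v1 - u1 * v2])   # f_1i
    else:
        A, B = np.array([0.0, u1, v1, 1.0]), np.array([0.0, u2, v2, 1.0])
        f = np.array([v1 - v2, u2 - u1, u1 * v2 - v1 * u2])   # f_2i
    l = np.cross(P @ A, P @ B)
    return l / l[2], f, plane

def rotation(alpha, beta, gamma):
    """Rz(gamma) @ Ry(beta) @ Rx(alpha), angles in radians."""
    ca, sa, cb, sb = np.cos(alpha), np.sin(alpha), np.cos(beta), np.sin(beta)
    cg, sg = np.cos(gamma), np.sin(gamma)
    Rx = np.array([[1, 0, 0], [0, ca, -sa], [0, sa, ca]])
    Ry = np.array([[cb, 0, sb], [0, 1, 0], [-sb, 0, cb]])
    Rz = np.array([[cg, -sg, 0], [sg, cg, 0], [0, 0, 1]])
    return Rz @ Ry @ Rx

# Hypothetical ground-truth camera.
rng = np.random.default_rng(0)
K = np.array([[800.0, 0.0, 320.0], [0.0, 800.0, 240.0], [0.0, 0.0, 1.0]])
R = rotation(*np.deg2rad([25.0, 44.0, 52.0]))
t = np.array([[0.1], [-0.2], [5.0]])
P_true = K @ np.hstack([R, t])

# Eight lines on each target plane.
observations = [synthetic_observation(P_true, plane, rng) for plane in ["xz", "yz"] * 8]
P_est = calibrate(observations)

# Compare up to scale and sign.
P_true_n = P_true / np.linalg.norm(P_true)
P_est_n = P_est * np.sign(np.sum(P_est * P_true_n))
max_diff = float(np.abs(P_est_n - P_true_n).max())
```

Lines on the O-XZ plane only constrain columns 1, 3 and 4 of P, and lines on the O-YZ plane only columns 2, 3 and 4, so lines from both planes are needed for a unique solution.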

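The translation vector $\mathbf{t}$ and the rotation matrix $\mathbf{R}$ provide the position and orientation of the measured object. Considering the orthogonality of the rotation matrix $\mathbf{R}$ and the upper triangular form of $\mathbf{A}$, the position and orientation are solved by [23]

$$\mathbf{t} = \mathbf{A}^{-1} \mathbf{p}_4, \qquad \alpha = \arctan\frac{r_{32}}{r_{33}}, \qquad \beta = -\arcsin r_{31}, \qquad \gamma = \arctan\frac{r_{21}}{r_{11}} \tag{16}$$

where $\mathbf{p}_4$ is the fourth column of the projection matrix $\mathbf{P}$, α, β, γ are the rotation angles about the o-x, o-y, o-z axes of the camera coordinate system, and $r_{11}$, $r_{21}$, $r_{31}$, $r_{32}$, $r_{33}$ are the corresponding elements of the rotation matrix $\mathbf{R} = [r_{mn}]_{3\times 3}$.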
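A minimal sketch of this decomposition is given below, assuming the rotation is composed as Rz(γ) Ry(β) Rx(α), which is the convention in which the angles depend only on r11, r21, r31, r32 and r33. The upper triangular factor is obtained with an RQ decomposition built from NumPy's QR routine; the function names and the test camera are hypothetical.

```python
import numpy as np

def rotation(alpha, beta, gamma):
    """Rz(gamma) @ Ry(beta) @ Rx(alpha), angles in radians (assumed convention)."""
    ca, sa, cb, sb = np.cos(alpha), np.sin(alpha), np.cos(beta), np.sin(beta)
    cg, sg = np.cos(gamma), np.sin(gamma)
    Rx = np.array([[1, 0, 0], [0, ca, -sa], [0, sa, ca]])
    Ry = np.array([[cb, 0, sb], [0, 1, 0], [-sb, 0, cb]])
    Rz = np.array([[cg, -sg, 0], [sg, cg, 0], [0, 0, 1]])
    return Rz @ Ry @ Rx

def decompose(P):
    """Split P ~ A [R t] into intrinsic matrix, rotation, translation and angles in degrees."""
    P = np.asarray(P, dtype=float)
    if np.linalg.det(P[:, :3]) < 0:        # remove the arbitrary overall sign of P
        P = -P
    M = P[:, :3]
    J = np.flipud(np.eye(3))               # exchange matrix, J @ J = I
    Qm, Rm = np.linalg.qr((J @ M).T)       # QR of the flipped, transposed matrix
    A = J @ Rm.T @ J                       # upper triangular factor
    R = J @ Qm.T                           # orthogonal factor, M = A @ R
    D = np.diag(np.sign(np.diag(A)))
    A, R = A @ D, D @ R                    # positive diagonal of A; product unchanged
    t = np.linalg.solve(A, P[:, 3])        # t = A^{-1} p4
    A = A / A[2, 2]                        # conventional scaling of the intrinsic matrix
    alpha = np.arctan2(R[2, 1], R[2, 2])
    beta = -np.arcsin(R[2, 0])
    gamma = np.arctan2(R[1, 0], R[0, 0])
    return A, R, t, np.degrees([alpha, beta, gamma])

# Hypothetical camera, multiplied by an arbitrary factor to mimic the homogeneous scale.
K = np.array([[800.0, 0.0, 320.0], [0.0, 800.0, 240.0], [0.0, 0.0, 1.0]])
R_true = rotation(*np.deg2rad([25.0, 44.0, 52.0]))
t_true = np.array([-778.0, 90.0, 1121.0])
P = -2.5 * K @ np.hstack([R_true, t_true.reshape(3, 1)])

A_est, R_est, t_est, angles = decompose(P)
```

Using `arctan2` instead of a plain arctangent keeps the correct quadrant for α and γ; the two agree whenever r33 and r11 are positive.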

Additional Information

How to cite this article: Xu, G. et al. Position and orientation measurement adopting camera calibrated by projection geometry of Plücker matrices of three-dimensional lines. Sci. Rep. 7, 44092; doi: 10.1038/srep44092 (2017).

Publisher's note: Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

References

1. Poulingirard, A. S., Thibault, S. & Laurendeau, D. Influence of camera calibration conditions on the accuracy of 3D reconstruction. Opt. Express 24, 2678–2686 (2016).
2. Thibault, S., Arfaoui, A. & Desaulniers, P. Cross-diffractive optical elements for wide angle geometric camera calibration. Opt. Lett. 36, 4770–4772 (2011).
3. Yu, J. et al. Square lattices of quasi-perfect optical vortices generated by two-dimensional encoding continuous-phase gratings. Opt. Lett. 40, 2513–2516 (2015).
4. Liu, K., Zhou, C., Wang, S., Wei, S. & Fan, X. 3D shape measurement of a ground surface optical element using band-pass random patterns projection. Chin. Opt. Lett. 13, 081101 (2015).
5. Recur, B. et al. Investigation on reconstruction methods applied to 3D terahertz computed tomography. Opt. Express 19, 5105–5117 (2011).
6. Wang, M., Cheng, Y., Yang, B., Jin, S. & Su, H. On-orbit calibration approach for optical navigation camera in deep space exploration. Opt. Express 24, 1446–1448 (2013).
7. Santolaria, J., Aguilar, J. J., Guillomía, D. & Cajal, C. A crenellated-target-based calibration method for laser triangulation sensors integration in articulated measurement arms. Robot CIM-INT Manuf. 27, 282–291 (2011).
8. Zhang, Z. Y. Camera calibration with one-dimensional objects. IEEE T. Pattern Anal. 26, 892–899 (2004).
9. Qi, F., Luo, Y. & Hu, D. Camera calibration with one-dimensional objects moving under gravity. Pattern Recogn. 40, 343–345 (2007).
10. Ricolfeviala, C. & Sanchezsalmeron, A. J. Camera calibration under optimal conditions. Opt. Express 19, 10769–10775 (2011).
11. Bethea, M. D., Lock, J. A., Merat, F. & Crouser, P. Three-dimensional camera calibration technique for stereo imaging velocimetry experiments. Opt. Eng. 36, 3445–3454 (1997).
12. Heikkila, J. Geometric camera calibration using circular control points. IEEE T. Pattern Anal. 22, 1066–1077 (2000).
13. Jia, Z. et al. Improved camera calibration method based on perpendicularity compensation for binocular stereo vision measurement system. Opt. Express 23, 15205–15223 (2015).
14. Tsai, R. A versatile camera calibration technique for high-accuracy 3D machine vision metrology using off-the-shelf TV cameras and lenses. IEEE T. Autom. Sci. Eng. 3, 323–344 (1987).
15. Xue, J., Su, X., Xiang, L. & Chen, W. Using concentric circles and wedge grating for camera calibration. Appl. Optics 51, 3811–381 (2012).
16. Chen, R. et al. Accurate calibration method for camera and projector in fringe patterns measurement system. Appl. Optics 55, 4293–4300 (2016).
17. Xu, G., Zheng, A., Li, X. & Su, J. A method to calibrate a camera using perpendicularity of 2D lines in the target observations. Sci. Rep. 6, 34591 (2016).
18. Yilmaztürk, F. Full-automatic self-calibration of color digital cameras using color targets. Opt. Express 19, 18164–18174 (2011).
19. Fernandes, L. A. F. & Oliveira, M. M. Real-time line detection through an improved Hough transform voting scheme. Pattern Recogn. 41, 299–314 (2008).
20. Abdel-Aziz, Y. I., Karara, H. M. & Hauck, M. Direct linear transformation from comparator coordinates into object space coordinates in close-range photogrammetry. Photogramm. Eng. Rem. S. 81, 103–107 (2015).
21. Hartley, R. & Zisserman, A. Multiple view geometry in computer vision (Cambridge university press, 2003).
22. Faugeras, O., Luong, Q. T. & Papadopoulo, T. The geometry of multiple images: the laws that govern the formation of multiple images of a scene and some of their applications (MIT press, 2004).
23. Herter, T. & Lott, K. Algorithms for decomposing 3-D orthogonal matrices into primitive rotations. Comput. Graph. 17, 517–527 (1993).

Acknowledgements

This work was sponsored by National Natural Science Foundation of China under Grant No. 51478204, No. 51205164, and Natural Science Foundation of Jilin Province under Grant No. 20170101214JC, No. 20150101027JC.

Author information

Contributions

G.X. provided the idea, G.X., A.Q.Z. and X.T.L. performed the data analysis, writing and editing of the manuscript, A.Q.Z. and G.X. contributed the program and experiments, J.S. prepared the figures. All authors contributed to the discussions.

Ethics declarations

Competing interests

The authors declare no competing financial interests.

Rights and permissions

This work is licensed under a Creative Commons Attribution 4.0 International License. The images or other third party material in this article are included in the article’s Creative Commons license, unless indicated otherwise in the credit line; if the material is not included under the Creative Commons license, users will need to obtain permission from the license holder to reproduce the material. To view a copy of this license, visit http://creativecommons.org/licenses/by/4.0/
